Introduction and review

Last month I talked about van der Bergh et al’s work on the precision of sensory evidence, which introduced the idea of a trapped prior. I think this concept has far-reaching implications for the rationalist project as a whole. I want to re-derive it, explain it more intuitively, then talk about why it might be relevant for things like intellectual, political and religious biases.

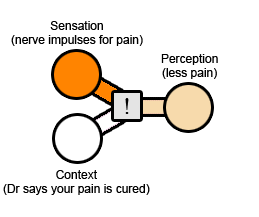

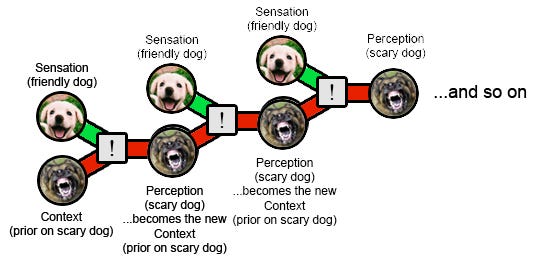

To review: the brain combines raw experience (eg sensations, memories) with context (eg priors, expectations, other related sensations and memories) to produce perceptions. You don’t notice this process; you are only able to consciously register the final perception, which feels exactly like raw experience.

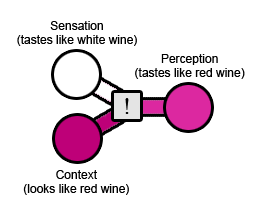

Or: maybe you feel like you are using a particular context-independent channel (eg hearing). Unbeknownst to you, the information in that channel is being context-modulated by the inputs of a different channel (eg vision). You don’t feel like “this is what I’m hearing, but my vision tells me differently, so I’ll compromise”. You feel like “this is exactly what I heard, with my ears, in a way vision didn’t affect at all”.

The most basic illusion I know of is the Wine Illusion; dye a white wine red, and lots of people will say it tastes like red wine. The raw experience - the taste of the wine itself - is that of a white wine. But the context is that you're drinking a red liquid. Result: it tastes like a red wine.

The placebo effect is almost equally simple. You're in pain, so your doctor gives you a “painkiller” (unbeknownst to you, it’s really a sugar pill). The raw experience is the nerve sending out just as many pain impulses as before. The context is that you've just taken a pill which a doctor assures you will make you feel better. Result: you feel less pain.

The cognitive version of this experience is normal Bayesian reasoning. Suppose you live in an ordinary California suburb and your friend says she saw a coyote on the way to work. You believe her; your raw experience (a friend saying a thing) and your context (coyotes are plentiful in your area) add up to more-likely-than-not. But suppose your friend says she saw a polar bear on the way to work. Now you're doubtful; the raw experience (a friend saying a thing) is the same, but the context (ie the very low prior on polar bears in California) makes it implausible.
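To put toy numbers on this, here is Bayes' rule in odds form. Everything numeric below is an assumption made up for illustration (the two priors and the strength of the friend's testimony); nothing here is measured. The point is only that the testimony carries the same weight in both cases and the prior does the rest.

```python
def posterior(prior: float, likelihood_ratio: float) -> float:
    """Bayes' rule in odds form: posterior odds = prior odds * likelihood ratio."""
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


# Assumption: a friend's report is 20x more likely if she really saw the animal.
TESTIMONY_LR = 20.0

# Assumed priors: coyotes are common in a California suburb, polar bears are not.
p_coyote = posterior(prior=0.10, likelihood_ratio=TESTIMONY_LR)
p_polar_bear = posterior(prior=1e-6, likelihood_ratio=TESTIMONY_LR)

print(f"coyote:     {p_coyote:.3f}")
print(f"polar bear: {p_polar_bear:.6f}")
```

With these assumed inputs the coyote claim ends up more likely than not, while the polar bear claim stays very improbable, even though the raw experience (a friend saying a thing) is identical.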

Normal Bayesian reasoning slides gradually into confirmation bias. Suppose you are a zealous Democrat. Your friend makes a plausible-sounding argument for a Democratic position. You believe it; your raw experience (an argument that sounds convincing) and your context (the Democrats are great) add up to more-likely-than-not true. But suppose your friend makes a plausible-sounding argument for a Republican position. Now you're doubtful; the raw experience (a friend making an argument with certain inherent plausibility) is the same, but the context (ie your very low prior on the Republicans being right about something) makes it unlikely.

Still, this ought to work eventually. Your friend just has to give you a good enough argument. Each argument will do a little damage to your prior against Republican beliefs. If she can come up with enough good evidence, you have to eventually accept reality, right?
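Here is a minimal sketch of what "a little damage" per argument would look like for an idealized reasoner. It assumes every argument is independent and equally strong (both big simplifications), and the function name and numbers are hypothetical. In log-odds terms, each argument adds the same fixed amount, so any prior short of certainty gets overcome after finitely many steps.

```python
import math


def arguments_needed(prior: float, likelihood_ratio: float, target: float = 0.5) -> int:
    """Count equally strong, independent arguments needed to lift `prior` to `target`."""
    if not (0.0 < prior < 1.0 and 0.0 < target < 1.0):
        raise ValueError("prior and target must be strictly between 0 and 1")
    if likelihood_ratio <= 1.0:
        raise ValueError("each argument must favor the claim (likelihood_ratio > 1)")
    log_odds = math.log(prior / (1.0 - prior))
    target_log_odds = math.log(target / (1.0 - target))
    step = math.log(likelihood_ratio)  # each argument adds the same amount
    count = 0
    while log_odds < target_log_odds:
        log_odds += step
        count += 1
    return count


# Assumed: a 1% prior on the Republican position, each argument worth 2:1 odds.
print(arguments_needed(prior=0.01, likelihood_ratio=2.0))
```

Under these assumptions a 1% prior needs seven such arguments to reach even odds. That is the behavior we would expect if updating worked as advertised.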

But in fact many political zealots never accept reality. It's not just that they're inherently skeptical of what the other party says. It's that even when something is proven beyond a shadow of a doubt, they still won't believe it. This is where we need to bring in the idea of trapped priors.

Trapped priors: the basic cognitive version

Phobias are a very simple case of trapped priors. They can be more technically defined as a failure of habituation, the fancy word for "learning a previously scary thing isn't scary anymore". There are lots of habituation studies on rats. You ring a bell, then give the rats an electric shock. After you do this enough times, they're scared of the bell - they run and cower as soon as they hear it. Then you switch to ringing the bell and not giving an electric shock. At the beginning, the rats are still scared of the bell. But after a while, they realize the bell can't hurt them anymore. They adjust to treating it just like any other noise; they lose their fear - they habituate.

The same thing happens to humans. Maybe a big dog growled at you when you were really young, and for a while you were scared of dogs. But then you met lots of friendly cute puppies, you realized that most dogs aren't scary, and you came to some reasonable conclusion like "big growly dogs are scary but cute puppies aren't."

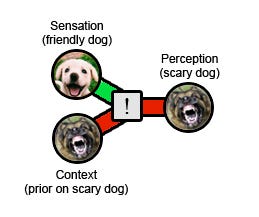

Some people never manage to do this. They get cynophobia, pathological fear of dogs. In its original technical use, a phobia is an intense fear that doesn't habituate. No matter how many times you get exposed to dogs without anything bad happening, you stay afraid. Why?

In the old days, psychologists would treat phobia by flooding patients with the phobic object. Got cynophobia? We'll stick you in a room with a giant Rottweiler, lock the door, and by the time you come out maybe you won't be afraid of dogs anymore. Sound barbaric? Maybe so, but more important it didn't really work. You could spend all day in the room with the Rottweiler, the Rottweiler could fall asleep or lick your face or do something else that should have been sufficient to convince you it wasn't scary, and by the time you got out you'd be even more afraid of dogs than when you went in.

Nowadays we're a little more careful. If you've got cynophobia, we'll start by making you look at pictures of dogs - if you're a severe enough case, even the pictures will make you a little nervous. Once you've looked at a zillion pictures, gotten so habituated to looking at pictures that they don't faze you at all, we'll put you in a big room with a cute puppy in a cage. You don't have to go near the puppy, you don't have to touch the puppy, just sit in the room without freaking out. Once you've done that a zillion times and lost all fear, we'll move you to something slightly doggier and scarier, then something slightly doggier and scarier than that, and so on, until you're locked in the room with the Rottweiler.

It makes sense that once you're exposed to dogs a million times and it goes fine and everything's okay, you lose your fear of dogs - that's normal habituation. But now we're back to the original question - how come flooding doesn't work? Forgetting the barbarism, how come we can't just start with the Rottweiler?

The common-sense answer is that you only habituate when an experience with a dog ends up being safe and okay. But being in the room with the Rottweiler is terrifying. It's not a safe okay experience. Even if the Rottweiler itself is perfectly nice and just sits calmly wagging its tail, your experience of being locked in the room is close to peak horror. Probably your intellect realizes that the bad experience isn't the Rottweiler's fault. But your lizard brain has developed a stronger association than before between dogs and unpleasant experiences. After all, you just spent time with a dog and it was a really unpleasant experience! Your fear of dogs increases.

(How does this feel from the inside? Less-self-aware patients will find their prior coloring every aspect of their interaction with the dog. Joyfully pouncing over to get a headpat gets interpreted as a vicious lunge; a whine at not being played with gets interpreted as a murderous growl, and so on. This sort of patient will leave the room saying 'the dog came this close to attacking me, I knew all dogs were dangerous!' More self-aware patients will say something like "I know deep down that dogs aren't going to hurt me, I just know that whenever I'm with a dog I'm going to have a panic attack and hate it and be miserable the whole time". Then they'll go into the room, have a panic attack, be miserable, and the link between dogs and misery will be even more cemented in their mind.)

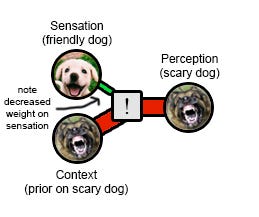

The more technical version of this same story is that habituation requires a perception of safety, but (like every other perception) this one depends on a combination of raw evidence and context. The raw evidence (the Rottweiler sat calmly wagging its tail) looks promising. But the context is a very strong prior that dogs are terrifying. If the prior is strong enough, it overwhelms the real experience. Result: the Rottweiler was terrifying. Any update you make on the situation will be in favor of dogs being terrifying, not against it!

This is the trapped prior. It's trapped because it can never update, no matter what evidence you get. You can have a million good experiences with dogs in a row, and each one will just etch your fear of dogs deeper into your system. Your prior fear of dogs determines your present experience, which in turn becomes the deranged prior for future encounters.
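A toy simulation may make the loop easier to see. This is a sketch under loud assumptions, not a model taken from the literature: fear is a single number (positive means "dogs are dangerous"), every encounter supplies the same reassuring raw evidence, perception is a weighted average of raw evidence and prior, and the prior then updates on the perception rather than on the raw evidence. The weights and learning rate are invented.

```python
def encounter(fear: float, raw_evidence: float, prior_weight: float, rate: float = 0.1) -> float:
    """One encounter: perception blends raw evidence with the prior,
    then the prior updates on the perception (not on the raw evidence)."""
    perceived = (1.0 - prior_weight) * raw_evidence + prior_weight * fear
    return fear + rate * perceived


def after_encounters(fear: float, n: int, raw_evidence: float = -1.0,
                     prior_weight: float = 0.5) -> float:
    """Run n identical friendly-dog encounters (negative raw evidence = safe)."""
    for _ in range(n):
        fear = encounter(fear, raw_evidence, prior_weight)
    return fear


mild = after_encounters(fear=0.5, n=50)    # someone a little wary of dogs
phobic = after_encounters(fear=3.0, n=50)  # someone with a very strong prior

print(f"mild fear after 50 friendly dogs:   {mild:.2f}")
print(f"phobic fear after 50 friendly dogs: {phobic:.2f}")
```

In this sketch the mildly wary person's fear falls with each friendly dog, while the phobic person's fear rises, even though both met exactly the same dogs. The only difference is whether the prior was strong enough to flip the sign of the perceived evidence.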

Trapped prior: the more complicated emotional version

The section above describes a simple cognitive case for trapped priors. It doesn't bring in the idea of emotion at all - an emotionless threat-assessment computer program could have the same problem if it used the same kind of Bayesian reasoning people do. But people find themselves more likely to be biased when they have strong emotions. Why?

Van der Bergh et al suggest that when experience is too intolerable, your brain will decrease bandwidth on the "raw experience" channel to protect you from the traumatic emotions. This is why some trauma victims' descriptions of their traumas are often oddly short, un-detailed, and to-the-point. This protects the victim from having to experience the scary stimuli and negative emotions in all their gory details. But it also ensures that context (and not the raw experience itself) will play the dominant role in determining their perception of an event.

You can't update on the evidence that the dog was friendly because your raw experience channel has become razor-thin; your experience is based almost entirely on your priors about what dogs should be like.
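The same kind of toy blend shows why bandwidth matters. Again, this is an illustrative sketch with invented numbers; `raw_bandwidth` is a hypothetical parameter standing in for how much weight the raw experience channel gets, and the rest goes to the prior.

```python
def perceived_threat(raw_evidence: float, prior_fear: float, raw_bandwidth: float) -> float:
    """Blend the two channels; raw_bandwidth is the share given to raw experience (0 to 1)."""
    return raw_bandwidth * raw_evidence + (1.0 - raw_bandwidth) * prior_fear


FRIENDLY_DOG = -1.0  # assumed raw evidence: negative means "this dog is safe"
MILD_FEAR = 0.5      # assumed prior: positive means "dogs are dangerous"

calm = perceived_threat(FRIENDLY_DOG, MILD_FEAR, raw_bandwidth=0.8)
panicked = perceived_threat(FRIENDLY_DOG, MILD_FEAR, raw_bandwidth=0.1)

print(f"wide raw channel:   {calm:+.2f}")
print(f"narrow raw channel: {panicked:+.2f}")
```

With a wide raw channel the friendly dog registers as safe. With the channel squeezed down, the identical dog and the identical mild prior produce a perception of threat, so even a modest prior can behave like a trapped one.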

I've heard some people call this "bitch eating cracker syndrome". The idea is - you're in an abusive or otherwise terrible relationship. Your partner has given you ample reason to hate them. But now you don't just hate them when they abuse you. Now even something as seemingly innocent as seeing them eating crackers makes you actively angry. In theory, an interaction with your partner where they just eat crackers and don't bother you in any way ought to produce some habituation, be a tiny piece of evidence that they're not always that bad. In reality, it will just make you hate them worse. At this point, your prior on them being bad is so high that every single interaction, regardless of how it goes, will make you hate them more. Your prior that they're bad has become trapped. And it colors every aspect of your interaction with them, so that even interactions which out-of-context are perfectly innocuous feel nightmarish from the inside.

From phobia to bias

I think this is a fruitful way to think of cognitive biases in general. If I'm a Republican, I might have a prior that Democrats are often wrong or lying or otherwise untrustworthy. In itself, that's fine and normal. It's a model shaped by my past experiences, the same as my prior against someone’s claim to have seen a polar bear. But if enough evidence showed up - bear tracks, clumps of fur, photographs - I should eventually overcome my prior and admit that the bear people had a point. Somehow in politics that rarely seems to happen.

For example, more scientifically literate people are more likely to have partisan positions on science (eg agree with their own party's position on scientifically contentious issues, even when outsiders view it as science-denialist). If they were merely biased, they should start out wrong, but each new fact they learn about science should make them update a little toward the correct position. That's not what we see. Rather, they start out wrong, and each new fact they learn, each unit of effort they put into becoming more scientifically educated, just makes them wronger. That's not what you see in normal Bayesian updating. It's a sign of a trapped prior.

Political scientists have traced out some of the steps of how this happens, and it looks a lot like the dog example: zealots' priors determine what information they pay attention to, then distort their judgment of that information.

So for example, in 1979 some psychologists asked partisans to read pairs of studies about capital punishment (a controversial issue at the time), then asked them to rate the methodologies on a scale from -8 to 8. Conservatives rated the pro-execution study at about +2 and the anti-execution study at about -2; liberals gave an only slightly smaller difference in the opposite direction. Of course, the psychologists had designed the studies to be about equally good, and even switched the conclusion of each study from subject to subject to average out any remaining real difference in study quality. At the end of reading the two studies, both the liberal and conservative groups reported believing that the evidence had confirmed their position, and described themselves as more certain than before that they were right. The more information they got on the details of the studies, the stronger their belief.

This pattern - increasing evidence just making you more certain of your preexisting belief, regardless of what it is - is pathognomonic of a trapped prior. These people are doomed.

I want to tie this back to one of my occasional hobbyhorses - discussion of "dog whistles". This is the theory that sometimes politicians say things whose literal meaning is completely innocuous, but which secretly convey reprehensible views, in a way other people with those reprehensible views can detect and appreciate. For example, in the 2016 election, Ted Cruz said he was against Donald Trump's "New York values". This sounded innocent - sure, people from the Heartland think big cities have a screwed-up moral compass. But various news sources argued it was actually Cruz's way of signaling support for anti-Semitism (because New York = Jews). Since then, almost anything any candidate from any party says has been accused of being a dog-whistle for something terrible - for example, apparently Joe Biden's comments about Black Lives Matter were dog-whistling his support for rioters burning down American cities.

Maybe this kind of thing is real sometimes. But think about how it interacts with a trapped prior. Whenever the party you don't like says something seemingly reasonable, you can interpret it in context as them wanting something horrible. Whenever they want a seemingly desirable thing, you secretly know it means they want a horrible moral atrocity. If a Republican talks about "law and order", it doesn't mean they're concerned about the victims of violent crime, it means they want to lock up as many black people as possible to strike a blow for white supremacy. When a Democrat talks about "gay rights", it doesn't mean letting people marry the people they love, it means destroying the family so they can replace it with state control over your children. I've had arguments with people who believe that no pro-life conservative really cares about fetuses, they just want to punish women for being sluts by denying them control over their bodies. And I've had arguments with people who believe that no pro-lockdown liberal really cares about COVID deaths, they just like the government being able to force people to wear masks as a sign of submission. Once you're at the point where all these things sound plausible, you are doomed. You can get a piece of evidence as neutral as "there's a deadly pandemic, so those people think you should wear a mask" and convert it into "they're trying to create an authoritarian dictatorship". And if someone calls you on it, you'll just tell them they need to look at it in context. It’s the bitch eating cracker syndrome except for politics - even when the other party does something completely neutral, it seems like extra reason to hate them.

Reiterating the cognitive vs. emotional distinction

When I showed some people an early draft of this article, they thought I was talking about "emotional bias". For example, the phobic patient fears the dog, so his anti-dog prior stays trapped. The partisan hates the other party, so she can't update about it normally.

While this certainly happens, I'm trying to make a broader point. The basic idea of a trapped prior is purely epistemic. It can happen (in theory) even in someone who doesn't feel emotions at all. If you gather sufficient evidence that there are no polar bears near you, and your algorithm for combining prior with new experience is just a little off, then you can end up rejecting all apparent evidence of polar bears as fake, and trapping your anti-polar-bear prior. This happens without any emotional component.

Where does the emotional component come in? I think van der Bergh argues that when something is so scary or hated that it's aversive to have to perceive it directly, your mind decreases bandwidth on the raw experience channel relative to the prior channel so that you avoid the negative stimulus. This makes the above failure mode much more likely. Trapped priors are a cognitive phenomenon, but emotions create the perfect storm of conditions for them to happen.

Along with the cognitive and emotional sources of bias, there's a third source: self-serving bias. People are more likely to believe ideas that would benefit them if true; for example, rich people are more likely to believe low taxes on the rich would help the economy; minimum-wage workers are more likely to believe that raising the minimum wage would be good for everyone. Although I don't have any formal evidence for this, I suspect that these are honest beliefs; the rich people aren't just pretending to believe that in order to trick you into voting for it. I don't consider the idea of bias as trapped priors to account for this third type of bias at all; it might relate in some way that I don't understand, or it may happen through a totally different process.

Future research directions

If this model is true, is there any hope?

I've sort of lazily written as if there's a "point of no return" - priors can update normally until they reach a certain strength, and after that they're trapped and can't update anymore. Probably this isn't true. Probably they just become trapped relative to the amount of evidence an ordinary person is likely to experience. Given immense, overwhelming evidence, the evidence could still drown out the prior and cause an update. But it would have to be really big.
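One way to make "trapped relative to the amount of evidence" concrete is to ask, in a toy model, how strong a single piece of reassuring evidence would have to be before the blended perception stops confirming the fear. This is a sketch under assumptions: perception is taken to be a weighted average of raw evidence and prior, and `prior_weight` and the fear level are hypothetical numbers, not estimates of anything.

```python
def evidence_needed(fear: float, prior_weight: float) -> float:
    """Strength of reassuring raw evidence at which the blended perception
    (1 - prior_weight) * (-evidence) + prior_weight * fear reaches zero."""
    if not 0.0 <= prior_weight < 1.0:
        raise ValueError("prior_weight must be in [0, 1)")
    return prior_weight * fear / (1.0 - prior_weight)


# Assumed fear level of 3.0, with progressively more weight on the prior.
for weight in (0.5, 0.9, 0.99):
    print(f"prior weight {weight:.2f}: evidence needed = {evidence_needed(3.0, weight):.1f}")
```

The required evidence is always finite in this sketch, but it grows very quickly as the weight on the prior approaches one, which is the sense in which a prior can be trapped in practice without being trapped in principle.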

(...but now I'm thinking of the stories of apocalypse cultists who, when the predicted apocalypse doesn't arrive, double down on their cult in one way or another. Festinger, Riecken, and Schachter's classic book on the subject, When Prophecy Fails, finds that these people "become a more fervent believer after a failure or disconfirmation". I'm not sure what level of evidence could possibly convince them. My usual metaphor is "if God came down from the heavens and told you..." - but God coming down from the heavens and telling you anything probably makes apocalypse cultism more probable, not less.)

If you want to get out of a trapped prior, the most promising source of hope is the psychotherapeutic tradition of treating phobias and PTSD. These people tend to recommend very gradual exposure to the phobic stimulus, sometimes with special gimmicks to prevent you from getting scared or help you "process" the information (there's no consensus as to whether the eye movements in EMDR operate through some complicated neurological pathway, work as placebo, or just distract you from the fear). A lot of times the "processing" involves trying to remember the stimulus multimodally, in as much detail as possible - for example drawing your trauma, or acting it out.
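Here is a toy comparison of flooding and graded exposure. It is a sketch, not a clinical model, and every number is invented. The assumptions: fear is one number (positive means "dogs are dangerous"), each session supplies the same reassuring raw evidence, a weaker stimulus activates the fear prior proportionally less, and the hypothetical therapist always picks the scariest rung of the ladder that would still register as clearly safe.

```python
LADDER = [0.1, 0.25, 0.5, 0.75, 1.0]  # hypothetical rungs: photos ... locked in with the Rottweiler


def perceived(fear: float, intensity: float, raw_evidence: float = -1.0,
              prior_weight: float = 0.5) -> float:
    """Blend safe raw evidence with a fear prior activated in proportion to intensity."""
    return (1.0 - prior_weight) * raw_evidence + prior_weight * fear * intensity


def tolerable_level(fear: float, margin: float = 0.1) -> float:
    """Highest rung whose session would still register as clearly safe; lowest rung otherwise."""
    ok = [level for level in LADDER if perceived(fear, level) <= -margin]
    return max(ok) if ok else LADDER[0]


def therapy(fear: float, sessions: int, graded: bool, rate: float = 0.1) -> float:
    """Run repeated sessions; the prior updates on what was perceived each time."""
    for _ in range(sessions):
        intensity = tolerable_level(fear) if graded else 1.0
        fear += rate * perceived(fear, intensity)
        fear = max(-10.0, min(10.0, fear))  # arbitrary clamp to keep the toy numbers readable
    return fear


after_flooding = therapy(fear=3.0, sessions=150, graded=False)
after_graded = therapy(fear=3.0, sessions=150, graded=True)

print(f"flooding: {after_flooding:.2f}")
print(f"graded:   {after_graded:.2f}")
```

In this sketch flooding drives the fear up to the clamp, while the graded schedule lowers it a little each session and works its way up the ladder. The design choice that matters is that the prior only ever updates on sessions that are perceived as safe.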

Sloman and Fernbach might be the political bias version of this phenomenon. They asked partisans their opinions on various issues, and as usual found strong partisan biases. Then they asked them to do various things. The only task that moderated partisan extremism was to give a precise mechanistic explanation of how their preferred policy should help - for example, describing in detail the mechanism by which sanctions on Iran would make its nuclear program go better or worse. The study doesn't give good enough examples for me to know precisely what this means, but I wonder if it's the equivalent of making trauma victims describe the traumatic event in detail; an attempt to give higher weight to the raw experience pathway compared to the prior pathway.

The other promising source of hope is psychedelics. These probably decrease the relative weight given to priors by agonizing 5-HT2A receptors. I used to be confused about why this effect of psychedelics could produce lasting change (permanently treat trauma, help people come to realizations that they agreed with even when psychedelics wore off). I now think this is because they can loosen a trapped prior, causing it to become untrapped, and causing the evidence that you've been building up over however many years to suddenly register and to update it all at once (this might be that “simulated annealing” thing everyone keeps talking about). I can't unreservedly recommend this as a pro-rationality intervention, because it also seems to create permanent weird false beliefs for some reason, but I think it's a foundation that someone might be able to build upon.

A final possibility is other practices and lifestyle changes that cause the brain to increase the weight of experience relative to priors. Meditation probably does this; see the discussion in the van der Bergh post for more detail. Probably every mental health intervention (good diet, exercise, etc) does this a little. And this is super speculative, and you should feel free to make fun of me for even thinking about it, but sensory deprivation might do this too, for the same reason that your eyes become more sensitive in the dark.

A hypothetical future research program for rationality should try to identify a reliable test for prior strength (possibly some existing psychiatric measures like mismatch negativity can be repurposed for this), then observe whether some interventions can raise or lower it consistently. Its goal would be a relatively tractable way to induce a low-prior state with minimal risk of psychosis or permanent fixation of weird beliefs, and then to encourage people to enter that state before reasoning in domains where they are likely to be heavily biased. Such a research program would dialogue heavily with psychiatry, since both mental diseases and biases would be subphenomena of the general category of trapped priors, and it's so far unclear exactly how related or unrelated they are and whether solutions to one would work for the other. Tentatively they’re probably not too closely related, since very neurotic people can sometimes reason very clearly and vice versa, but I don't think we yet have a good understanding of why this should be.

Ironically, my prior on this theory is trapped - everything I read makes me more and more convinced it is true and important. I look forward to getting outside perspectives.

Trapped Priors As A Basic Problem Of Rationality